Everyone is asking the wrong question about AI.

The question isn’t:

“How do we use AI in our organization?”

The real question is:

“Is our operating model capable of turning AI into value?”

Because here’s the uncomfortable truth:

Most enterprises won’t fail at AI because of technology.

They will fail because their system is structurally incapable of absorbing it.

The Illusion: AI as a Tool

Right now, most AI initiatives look like this:

- A task force is formed.

- A strategy deck is created.

- A few pilots are launched.

- Some productivity gains are reported.

- Nothing scales.

It feels innovative.

But structurally, nothing has changed.

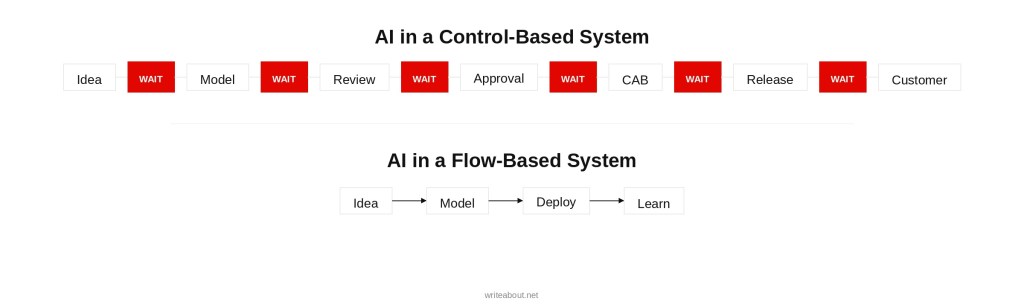

AI gets installed into a system optimized for:

- Control

- Approvals

- Predictability

- Siloed ownership

And then leaders wonder why “AI transformation” stalls.

AI doesn’t fail.

The operating model does.

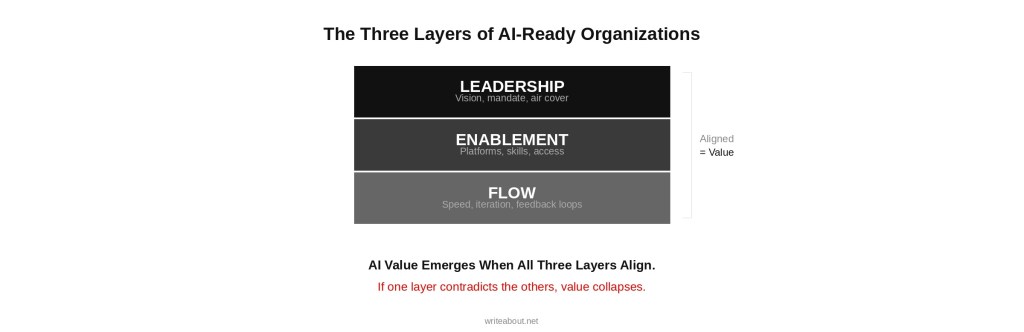

The Structural Reality: Three Layers Must Align

If AI is going to generate real business value, three layers must work together.

This is the foundation of the Three-Layer Transformation Model.

Layer 1: Flow – Where Value Moves

AI only creates value when it:

- Ships into production

- Influences decisions

- Changes customer outcomes

- Is iterated based on feedback

That requires:

- Fast deployment

- Short feedback loops

- Ownership at team level

- Observability and monitoring

- Continuous learning cycles

If it takes 12–18 weeks to deploy a model…

If every change requires committee approval…

If incidents create fear instead of learning…

AI becomes a slide deck — not a capability.

Layer 2: Enablement – Where Friction Is Removed

AI introduces:

- Data governance requirements

- Compliance obligations

- Security concerns

- Model risk management

- Infrastructure complexity

If governance is manual…

If compliance requires documentation rituals…

If environments require tickets…

If security acts as gatekeeper…

AI experiments suffocate.

Enablement means:

- Compliance-as-code

- Guardrails instead of gates

- Pre-approved patterns

- Self-service environments

- Platform capabilities teams can use independently

AI requires scaffolding.

Without enablement, experimentation turns into bureaucracy.

![Gate model vs. guardrail model. Left (Gate Model): Team → Approval Gate → Approval Gate → Approval Gate. Right (Guardrail Model): a team inside a lane with boundaries but no stops.](https://writeabout.net/wp-content/uploads/2026/03/guardrails-vs-gates.jpg?w=1024)
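To make "compliance-as-code" and "guardrails instead of gates" more concrete, here is a minimal Python sketch. It assumes a hypothetical deployment request and a handful of illustrative rules (pre-approved patterns, allowed data classes, monitoring, a model card). None of these names or rules come from a specific tool, standard, or regulation. The point is the shape: every request is checked automatically, and a team that stays inside the lane ships without waiting for an approval meeting.

```python
from dataclasses import dataclass

# Illustrative guardrails (assumption): these rule values are hypothetical,
# not taken from any standard, regulation, or policy product.
PRE_APPROVED_PATTERNS = {"batch-scoring", "rag-internal-docs"}
ALLOWED_DATA_CLASSES = {"public", "internal"}


@dataclass
class DeploymentRequest:
    """Hypothetical description of an AI model a team wants to ship."""
    team: str
    pattern: str
    data_classes: set[str]
    monitoring_enabled: bool
    model_card_present: bool


def check_guardrails(req: DeploymentRequest) -> list[str]:
    """Return every guardrail violation; an empty list means the request is inside the lane."""
    violations: list[str] = []
    if req.pattern not in PRE_APPROVED_PATTERNS:
        violations.append(f"pattern '{req.pattern}' is not pre-approved")
    disallowed = req.data_classes - ALLOWED_DATA_CLASSES
    if disallowed:
        violations.append(f"data classes not allowed: {sorted(disallowed)}")
    if not req.monitoring_enabled:
        violations.append("monitoring must be enabled")
    if not req.model_card_present:
        violations.append("model card is missing")
    return violations


def deploy(req: DeploymentRequest) -> str:
    """Ship automatically when all guardrails pass; otherwise return the exact reasons.

    There is no human approval step here: the automated checks replace the gate.
    """
    violations = check_guardrails(req)
    if violations:
        return "blocked: " + "; ".join(violations)
    return f"deployed for team {req.team}"


if __name__ == "__main__":
    inside_lane = DeploymentRequest("claims", "batch-scoring", {"internal"}, True, True)
    outside_lane = DeploymentRequest("claims", "custom-agent", {"pii"}, False, True)
    print(deploy(inside_lane))   # deployed for team claims
    print(deploy(outside_lane))  # prints the three violations, joined by "; "
```

In practice, checks like these would typically run in a CI/CD pipeline or a policy engine. A violation sends the team back with a precise reason instead of into an approval queue, and the rules themselves become the place where governance evolves.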

Layer 3: Leadership – Where Risk Is Interpreted

This is the most overlooked layer.

AI introduces uncertainty.

Leaders must decide:

- Do we punish mistakes?

- Do we allow small failures?

- Do we demand certainty before experiments?

- Do we escalate every decision?

If leadership says:

“We want innovation.”

But behaves like:

“Nothing must go wrong.”

Teams won’t experiment.

They’ll wait.

Escalate.

Over-document.

Play safe.

AI requires:

- Psychological safety

- Transparency about trade-offs

- Incentives aligned with learning

- Trust in teams

Without leadership evolution, AI becomes theater.

The Pattern I’m Seeing in Enterprises

Right now, most companies are trying to improve only one layer:

They invest in Flow:

- Copilot licenses

- Model experimentation

- New tools

But:

- Enablement still requires manual approval.

- Leadership still rewards control.

Result?

Faster flow runs into manual approvals and creates friction.

Enablement becomes bureaucracy.

Leadership unintentionally blocks progress.

Exactly the same structural contradiction that kills Agile transformations.

Only now with AI.

The Hard Truth

You cannot “install AI” into a system optimized for predictability and approval.

You must align:

- How work flows

- How friction is removed

- How decisions are shaped

AI amplifies whatever system it enters.

If your system is slow, political, and risk-averse…

AI will amplify slowness, politics, and fear.

If your system is adaptive, empowered, and learning-oriented…

AI will amplify intelligence.

A Diagnostic for Leaders

If your AI initiative feels stuck, ask:

- Is this a Flow constraint? (Deployment speed, feedback cycles, ownership)

- Is this an Enablement constraint? (Governance friction, manual compliance, environment access)

- Is this a Leadership constraint? (Fear, escalation, misaligned incentives)

Most executives focus on the first.

The real constraint is often the third.

AI Is Not a Technology Problem

It is an operating model test.

It reveals:

- Where control overrides autonomy

- Where governance overrides enablement

- Where leadership debt overrides learning

AI doesn’t break organizations.

It exposes them.

If you want AI to create real business value, don’t start with tools.

Start with structure.