TL;DR: In METR’s randomized study, experienced developers using AI felt about 20% faster while actually taking 19% longer. The problem isn’t AI; it’s that we measure output (code written) instead of outcomes (value delivered). Use DORA metrics and value stream thinking to set the right baseline. AI amplifies whatever you already have: strong foundations get stronger, weak ones crumble faster. The future developer is a “commander” directing AI agents, not a coder typing syntax.

Everyone is talking about the AI productivity revolution in software development. Vendors claim 55% faster task completion. Developers self-report 30% gains. GitHub says 41% of all code written in 2025 is AI-generated. And yet — most organizations see zero measurable improvement in delivery outcomes.

Welcome to the AI Productivity Paradox. And I’m here to tell you: the paradox isn’t about AI. It’s about how catastrophically wrong we’ve been measuring developer productivity all along.

I’ve spent years helping organizations improve their software delivery — and I’ve seen this movie before. Every time a new technology promises a step-change in productivity (cloud, containers, microservices, low-code), the same pattern repeats: early hype, disappointing results, and then — finally — gains for the organizations that understood what “productivity” actually means. AI is following the same script, just at hyperspeed.

You’re Measuring the Assembly Line. Software Isn’t One.

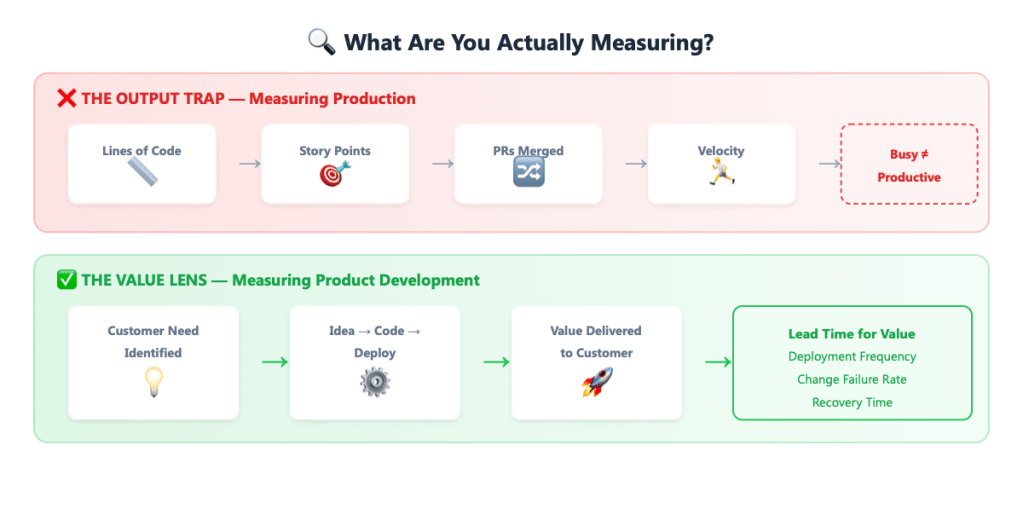

When I wrote Accelerate DevOps with GitHub, I opened with a chapter called “Metrics that Matter” for a reason. It’s the foundation without which everything else breaks down. And the core insight is deceptively simple: software development is product development, not production.

In a factory, productivity means producing more widgets per hour. Measure output, optimize throughput, celebrate velocity. Simple. But software isn’t widgets. Writing more code doesn’t create more value — it often destroys it by adding complexity, technical debt, and maintenance burden.

Yet most organizations still measure developers like factory workers: story points completed, lines of code written, PRs merged per sprint, tickets closed. They’re measuring busyness, not value. And now they’re confused when AI makes developers “busier” without making the business better off.

Here’s the uncomfortable truth that many leaders still haven’t internalized: in product development, doing more is often worse than doing less. Every feature you add is a feature you have to maintain, test, secure, and support. The best product teams are ruthlessly focused on delivering the smallest thing that creates the most value — and then learning from it. That’s the opposite of “maximize output.”

This is the equivalent of measuring a car designer’s productivity by how many blueprints they draw per day. You wouldn’t do that. You’d measure how quickly a great car gets from concept to customer. That’s value stream thinking — and it’s the only lens that makes developer productivity intelligible.

The Value Stream: Where Productivity Actually Lives

In Accelerate DevOps with GitHub, I build on the research from the DORA team (now part of Google Cloud) and the groundbreaking book Accelerate by Nicole Forsgren, Jez Humble, and Gene Kim. Their insight, validated by survey data from tens of thousands of technology professionals, is that you measure software delivery performance through outcomes, not output:

- Deployment Frequency — How often do you deliver value to users?

- Lead Time for Changes — How fast does an idea become running software?

- Change Failure Rate — How often does a change break things?

- Failed Deployment Recovery Time — How quickly do you bounce back?
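To make the four metrics concrete, here is a minimal Python sketch that derives them from a list of deployment records. It assumes you can export, for each production deployment, its timestamp, the commit times of the changes it shipped, whether it caused a failure, and when service was restored. The `Deployment` record and `dora_metrics` function are hypothetical names for illustration, not part of any DORA or GitHub API, and DORA’s own benchmarks come from survey responses, so treat numbers computed this way as an approximation of the same ideas.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical export format)."""
    deployed_at: datetime
    commit_times: list[datetime] = field(default_factory=list)  # commits it shipped
    failed: bool = False                    # did it cause a failure in production?
    restored_at: Optional[datetime] = None  # when service recovered, if it failed


def hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def dora_metrics(deploys: list[Deployment], period_days: float) -> dict[str, float]:
    """Approximate the four DORA metrics over an observation window."""
    if not deploys or period_days <= 0:
        raise ValueError("need deployments and a positive window")

    # Deployment frequency: deployments per day in the window.
    frequency = len(deploys) / period_days

    # Lead time for changes: median commit-to-production time, in hours.
    lead_times = [hours(d.deployed_at - c) for d in deploys for c in d.commit_times]

    # Change failure rate: share of deployments that caused a failure.
    failures = [d for d in deploys if d.failed]

    # Recovery time: median hours to restore service, measured here from the
    # deployment itself (a simplification; real tooling uses failure detection).
    recoveries = [
        hours(d.restored_at - d.deployed_at)
        for d in failures
        if d.restored_at is not None
    ]

    return {
        "deployment_frequency_per_day": frequency,
        "lead_time_hours": median(lead_times) if lead_times else 0.0,
        "change_failure_rate": len(failures) / len(deploys),
        "recovery_time_hours": median(recoveries) if recoveries else 0.0,
    }


# Usage with two made-up deployments over a two-day window.
t0 = datetime(2025, 1, 1, 9)
deploys = [
    Deployment(t0 + timedelta(hours=4), [t0]),
    Deployment(
        t0 + timedelta(days=1, hours=2),
        [t0 + timedelta(days=1)],
        failed=True,
        restored_at=t0 + timedelta(days=1, hours=3),
    ),
]
print(dora_metrics(deploys, period_days=2))
```

Using medians rather than means keeps a single stuck change or one long outage from dominating the baseline.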

These metrics aren’t about how much code you write. They’re about how effectively your organization converts ideas into customer value. That’s the value stream — the entire journey from “we should build this” to “customers are using it.”

Notice what’s absent from this list: story points, velocity, lines of code, or any measure of individual developer output. That’s intentional. The research is unambiguous: output metrics don’t correlate with organizational performance. Teams that optimize for velocity often sacrifice quality, create rework loops, and end up slower at delivering value — even though their dashboards look impressive.

And here’s what makes this critical in the age of AI: if you’re measuring the wrong thing, AI will simply help you do the wrong thing faster.

The AI Productivity Paradox Is a Measurement Paradox

The 2025 DORA Report — aptly titled “State of AI-Assisted Software Development” — confirmed what many of us suspected. After surveying nearly 5,000 technology professionals, the headline finding was stark: AI adoption positively correlates with throughput but also with higher instability. Teams ship faster, but with more change failures, more rework, and longer recovery times.

Meanwhile, a landmark randomized controlled trial by METR delivered an even more provocative finding. Sixteen experienced open-source developers completed 246 tasks in mature codebases they’d worked in for an average of five years. Before starting, they predicted AI tools would cut their task completion time by 24%. After the study, they estimated AI had cut it by 20%. The actual measured result: AI increased task completion time by 19%. Developers felt faster while actually being slower.

Think about that for a moment. The subjective experience of productivity completely diverged from reality. This is what happens when you don’t have rigorous outcome metrics — you mistake the feeling of flow for the delivery of value.

The paradox resolves once you adopt value stream thinking. AI absolutely accelerates coding — the typing-of-characters-into-editors part. But coding was never the bottleneck. In most organizations, the bottleneck is everything around the code: understanding requirements, making design decisions, reviewing changes, coordinating across teams, deploying safely, and learning from failures.

Research from Faros AI, analyzing telemetry from over 10,000 developers across 1,255 teams, found that AI-augmented developers write more code and complete more tasks — but the code is bigger and buggier, shifting the bottleneck to review. They’re producing more output while potentially degrading the outcome.

GitClear’s analysis of code quality trends paints a similar picture: “code churn” (lines that are reverted or rewritten within two weeks of being written) has been rising sharply since the widespread adoption of AI coding assistants. We’re generating more code that nobody needs. That’s not productivity. That’s waste dressed in a productivity costume.
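As a rough illustration of what a churn metric measures, the sketch below computes the share of added lines that were deleted or rewritten within a two-week window. It is a simplified stand-in, not GitClear’s actual methodology. The `AddedLine` record and `churn_rate` function are hypothetical, and in practice you would have to derive `replaced_at` from your version history by tracking lines across commits, which this sketch does not attempt.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

CHURN_WINDOW = timedelta(days=14)  # "within two weeks of being written"


@dataclass
class AddedLine:
    """A line of code added in some commit (hypothetical record shape)."""
    authored_at: datetime
    replaced_at: Optional[datetime] = None  # when it was deleted or rewritten, if ever


def churn_rate(lines: list[AddedLine], window: timedelta = CHURN_WINDOW) -> float:
    """Share of added lines that were replaced within `window` of being written."""
    if not lines:
        return 0.0
    churned = sum(
        1
        for line in lines
        if line.replaced_at is not None and line.replaced_at - line.authored_at <= window
    )
    return churned / len(lines)


t = datetime(2025, 3, 1)
sample = [
    AddedLine(t, t + timedelta(days=3)),   # rewritten after 3 days: churn
    AddedLine(t, t + timedelta(days=30)),  # rewritten after a month: not churn
    AddedLine(t),                          # still in the codebase: not churn
    AddedLine(t, t + timedelta(days=14)),  # exactly two weeks: counted as churn
]
print(churn_rate(sample))  # → 0.5
```

Tracked over time and alongside the delivery metrics, a rising churn rate is one signal that extra output is turning into rework rather than value.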

The 2025 Stack Overflow Developer Survey adds another dimension: positive sentiment toward AI tools has dropped to 60%, down from over 70% in the two prior years. Only 3% of developers say they “highly trust” the output of AI tools. Developers are using the tools more while trusting them less, a tension that suggests we’re in a phase of experimentation, not mastery.

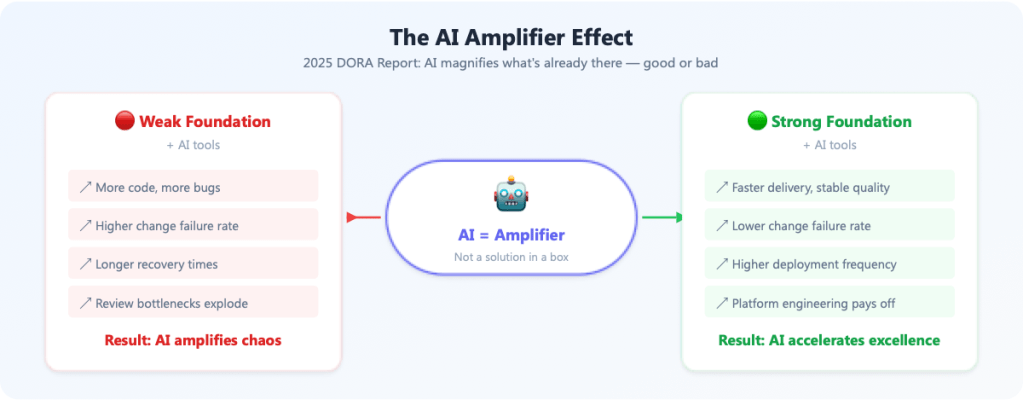

AI Is an Amplifier, Not a Solution

The 2025 DORA report nailed it with one phrase: “AI is an amplifier.” It magnifies whatever’s already there. Strong engineering culture, good architecture, solid CI/CD, clear value streams? AI makes all of that better. Weak foundations, unclear ownership, poor testing discipline, no deployment automation? AI pours gasoline on the fire.

This is why the organizations that will benefit most from AI coding tools are, somewhat ironically, the ones that least need saving. They’ve already invested in the fundamentals I describe in Accelerate DevOps with GitHub: everything from trunk-based development and continuous integration to team topologies and inner source culture.

The 2025 DORA findings also revealed that platform engineering is the key enabler. A full 90% of organizations now have internal platforms — and where those platforms are high-quality, AI’s benefits compound. Where they’re low-quality, AI’s impact is negligible. Your developer experience is your AI strategy.

From Coder to Commander

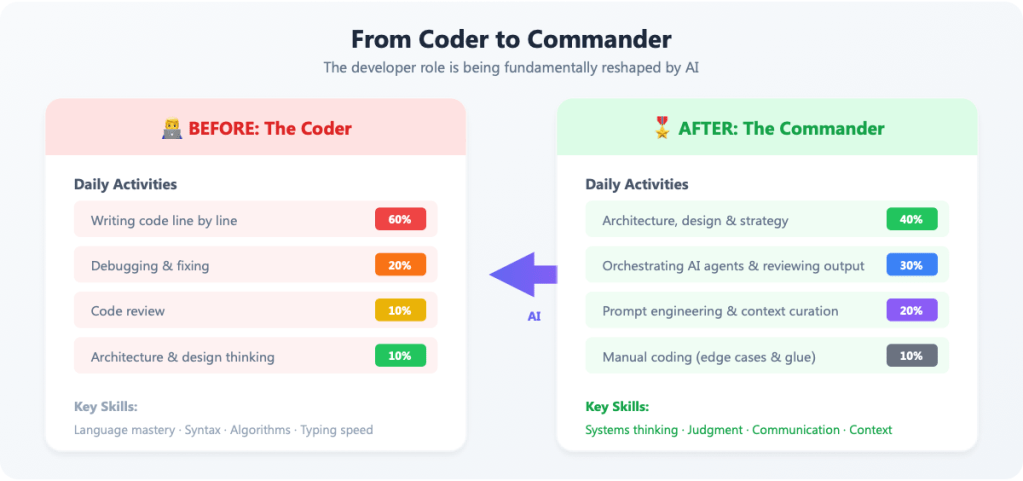

Here’s where it gets really interesting — and, frankly, a bit unsettling for the industry. AI isn’t just a tool. It’s reshaping the very identity of what a developer is.

We’re witnessing a shift from coders to commanders. The traditional developer spent 60%+ of their time writing and debugging code — line by line, character by character. Syntax mastery, language fluency, and typing speed were prized. The job was essentially artisanal: hand-crafting software one function at a time.

The AI-era developer is something different. They’re an orchestrator — directing AI agents, curating context, reviewing generated output, making architectural decisions, and ensuring the system solves the right problem. The daily workflow is shifting from writing code to directing its creation.

By late 2025, AI coding agents had progressed from autocomplete on steroids to semi-autonomous systems capable of implementing entire features over multiple hours. In 2026, we’re seeing agents that can work for days, navigating complex codebases, writing tests, and coordinating with other agents. The engineer’s job is increasingly about providing intent and judgment — the things AI still can’t do well — while delegating the mechanical translation of intent into code.

This has profound implications across three dimensions:

1. Developer Skills

The skills that made a great developer in 2020 aren’t the same skills that will define excellence in 2026. Language-specific syntax knowledge? AI has that covered. What it doesn’t have: systems thinking, the judgment to know when generated code is subtly wrong, the ability to decompose a complex problem into the right abstractions, the communication skills to frame context for an AI agent. We’re moving from valuing implementation fluency to valuing design wisdom.

2. Team Structure

If individual coding speed is no longer the constraint, the bottleneck shifts to coordination, architecture, and review. Teams need fewer “hands on keyboards” and more “eyes on outcomes.” The team topology thinking from Matthew Skelton and Manuel Pais — stream-aligned teams, enabling teams, platform teams — becomes even more critical. In an AI-amplified world, Conway’s Law still rules: your software will mirror your organizational communication structure, and AI won’t fix a bad org chart.

3. Organizational Structure

Organizations will need to rethink how they value and develop talent. Career ladders built on “code output” become meaningless. Performance reviews anchored in velocity or story points were always flawed — now they’re actively harmful. The developers who thrive will be those who can think in systems, communicate across boundaries, and make good decisions under uncertainty. That’s a leadership skillset, not a coding one.

This also has implications for hiring. If AI handles the routine coding, the junior developer on-ramp changes fundamentally. Traditionally, juniors learned by writing lots of simple code and gradually taking on complexity. In the AI era, juniors risk skipping the learning phase entirely — getting AI to write code they don’t fully understand. Organizations need to deliberately design learning paths that build deep understanding, not just AI-assisted output. The commander who never served as a soldier is a dangerous thing.

And let’s talk about the elephant in the room: team size. If each developer can orchestrate AI agents to do the work of three, does that mean you need a third of the developers? Not necessarily. History shows that productivity gains in software tend to be absorbed by scope expansion — teams tackle harder problems, build more ambitious products, and address previously ignored technical debt. The same happened with DevOps: automation didn’t eliminate ops teams, it elevated them. But the composition of teams will change. Expect smaller, more senior teams with broader scope, supported by powerful platforms and AI-native toolchains.

Setting the Right Baseline

So here’s the practical question: if you want to see AI’s real impact on your software development productivity, how do you set the baseline correctly?

Step 1: Measure the value stream, not the workstation. Track your DORA metrics. Understand your lead time from commit to production. Know your deployment frequency and failure rates. These are your baselines. If you don’t have them, you have no way to evaluate whether AI is helping.
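Once the baseline exists, comparing a later period against it is simple arithmetic, as long as you respect each metric’s direction: higher is better for deployment frequency, lower is better for lead time, change failure rate, and recovery time. The sketch below assumes metric dictionaries with hypothetical key names, and the example numbers are invented to mirror the pattern the 2025 DORA report describes (throughput up, stability down); real inputs would come from whatever delivery data you already collect.

```python
# Direction in which each metric improves (True means higher is better).
HIGHER_IS_BETTER = {
    "deployment_frequency_per_day": True,
    "lead_time_hours": False,
    "change_failure_rate": False,
    "recovery_time_hours": False,
}


def compare_to_baseline(
    baseline: dict[str, float], current: dict[str, float]
) -> dict[str, tuple[float, str]]:
    """Relative change per metric plus a verdict that respects its direction."""
    report = {}
    for name, higher_is_better in HIGHER_IS_BETTER.items():
        before, after = baseline[name], current[name]
        if before == 0:
            continue  # relative change from zero is undefined; inspect manually
        change = (after - before) / before
        improved = change > 0 if higher_is_better else change < 0
        report[name] = (round(change, 3), "improved" if improved else "not improved")
    return report


# Hypothetical numbers: more deployments and shorter lead time, but less stability.
baseline = {"deployment_frequency_per_day": 1.0, "lead_time_hours": 48.0,
            "change_failure_rate": 0.1, "recovery_time_hours": 2.0}
after_ai = {"deployment_frequency_per_day": 1.5, "lead_time_hours": 36.0,
            "change_failure_rate": 0.2, "recovery_time_hours": 3.0}

for metric, (change, verdict) in compare_to_baseline(baseline, after_ai).items():
    print(f"{metric}: {change:+.1%} ({verdict})")
```

A report like this makes the throughput-versus-stability trade-off visible instead of letting a single headline number hide it.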

Step 2: Map where time actually goes. Use the SPACE framework (Satisfaction and well-being, Performance, Activity, Communication and collaboration, Efficiency and flow) to understand developer experience holistically. You’ll likely discover that coding time is less than 30% of the total effort. If AI halves the time spent on that 30%, you save 15% of total time, assuming nothing else degrades. And as the METR study showed, that’s a big assumption.
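The arithmetic in this step is essentially Amdahl’s law applied to a workflow. Here is a minimal sketch, assuming a simple model in which only the coding share of the work gets faster while all other work either stays the same or grows by a given fraction. The function name and the 25% growth figure in the example are hypothetical illustrations, not measured values.

```python
def new_total_time(coding_share: float, coding_time_cut: float,
                   other_work_growth: float = 0.0) -> float:
    """Total effort after adopting AI, as a fraction of the original (1.0 = unchanged).

    coding_share:      fraction of total effort spent coding (e.g. 0.30)
    coding_time_cut:   fraction by which coding time shrinks (0.50 = half as long)
    other_work_growth: fraction by which all non-coding work grows, e.g. from
                       extra review and rework; 0.0 means nothing else degrades
    """
    if not (0.0 <= coding_share <= 1.0 and 0.0 <= coding_time_cut <= 1.0):
        raise ValueError("shares and cuts must be fractions between 0 and 1")
    coding = coding_share * (1.0 - coding_time_cut)
    other = (1.0 - coding_share) * (1.0 + other_work_growth)
    return coding + other


# Best case from the text: coding is 30% of effort and takes half as long.
print(f"time saved: {1 - new_total_time(0.30, 0.50):.0%}")
# Same coding gain, but review and rework grow the other 70% of the work by 25%.
print(f"relative total time: {new_total_time(0.30, 0.50, 0.25):.3f}")
```

A 15% cut in total time corresponds to a throughput speedup of about 1 / 0.85 ≈ 1.18x, and a modest growth in non-coding work is enough to push the total above 1.0, meaning a net slowdown.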

Step 3: Watch the system, not the individual. An individual developer might generate 3x more code with AI. But if review queues triple, deployment failures spike, and architectural coherence degrades, the system is slower. Always measure at the value stream level.
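A toy model shows why an individual speed-up can vanish at the system level: in a serial pipeline, steady-state throughput is capped by the slowest stage, and work arriving faster than a stage can handle it simply piles up in that stage’s queue. The stage names and weekly capacities below are hypothetical, and real value streams have feedback loops (rework, incidents) that this sketch ignores.

```python
def throughput(capacity: dict[str, float]) -> tuple[str, float]:
    """Return the bottleneck stage and the pipeline's steady-state throughput."""
    bottleneck = min(capacity, key=lambda stage: capacity[stage])
    return bottleneck, capacity[bottleneck]


def backlog_growth(capacity: dict[str, float]) -> dict[str, float]:
    """Work arriving at each downstream stage faster than it can be processed, per week.

    Each stage receives work at the rate its upstream stages can deliver it
    (the smallest capacity seen so far); dict order is treated as pipeline order.
    """
    growth: dict[str, float] = {}
    inflow = None
    for stage, cap in capacity.items():
        if inflow is not None:
            growth[stage] = max(0.0, inflow - cap)
        inflow = cap if inflow is None else min(inflow, cap)
    return growth


# Changes per week each stage can handle (hypothetical numbers).
before = {"coding": 8, "review": 9, "deploy": 12}
after_ai = {**before, "coding": 24}      # AI triples coding capacity only
bigger_prs = {**after_ai, "review": 6}   # larger, buggier PRs slow review down

print(throughput(before), throughput(after_ai), throughput(bigger_prs))
print(backlog_growth(after_ai))
```

Tripling coding capacity moves the bottleneck to review and lifts throughput only from 8 to 9 changes per week while the review backlog grows every week; if bigger PRs also slow review, the whole system ends up slower than before.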

Step 4: Invest in the amplified fundamentals. Before chasing AI productivity gains, ensure your engineering platform is solid, your CI/CD pipeline is fast and reliable, your team topologies are clear, and your value streams are understood. AI will amplify whatever you have — make sure it’s worth amplifying.

Step 5: Redefine “developer productivity” entirely. Stop asking “how much code did we produce?” and start asking “how quickly and safely did we deliver value to customers?” If your organization can answer that question — with data — you have a baseline worth measuring against. If you can’t, no amount of AI tooling will tell you whether you’re getting better.

I sometimes describe this to executives as the difference between measuring a kitchen’s productivity by how many plates are stacked at the end of the night versus how many happy, returning customers walked out the door. Both are easy to count. Only one matters.

The Bottom Line

Here’s what I keep telling the teams I work with: the AI coding revolution is real, but it’s not the revolution most people think it is. It’s not a revolution in coding speed. It’s a revolution in what it means to be a software professional — and, by extension, in how organizations need to think about software delivery.

The productivity gains aren’t hiding in faster typing or more PRs. They’re hiding in the same place software productivity has always lived: in the ability to deliver the right thing to the right customer at the right time — safely, sustainably, and repeatedly.

If your baseline is story points, you’ll measure the wrong thing with greater precision. If your baseline is the value stream, you’ll see where AI genuinely helps — and where it’s just noise dressed up as progress.

The developers who will thrive aren’t the ones who type the fastest. They’re the ones who think the clearest. In a world where AI can write any code, the most valuable skill is knowing what code should exist.

And that, as they say, was never about the keystrokes.

Sources & Further Reading:

- 2025 DORA Report: State of AI-Assisted Software Development (Google/DORA)

- METR Study: Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity (METR)

- The AI Productivity Paradox Research Report (Faros AI)

- State of Developer Ecosystem 2025 (JetBrains)

- AI Coding: Not Everyone Is Convinced (MIT Technology Review)

- Agentic Coding in 2026: Trends (Efficient Coder)

- DORA 2025: Faster, But Are We Any Better? (DevOps.com)

- 2025 Stack Overflow Developer Survey: AI (Stack Overflow)

- Forsgren, N., Humble, J., & Kim, G. Accelerate: The Science of Lean Software and DevOps

- Kaufmann, M. Accelerate DevOps with GitHub (Packt Publishing)

One thought on “Stop Counting Keystrokes: Why AI Isn’t Making Developers More Productive (Yet) — And How to Fix Your Measurement”